The Mighty LLM Match Up - Part 2

We analyzed the pros, cons and key differences between ChatGPT, Gemini, Claude, Llama and Falcon. Your complete guide to choosing the right LLM.

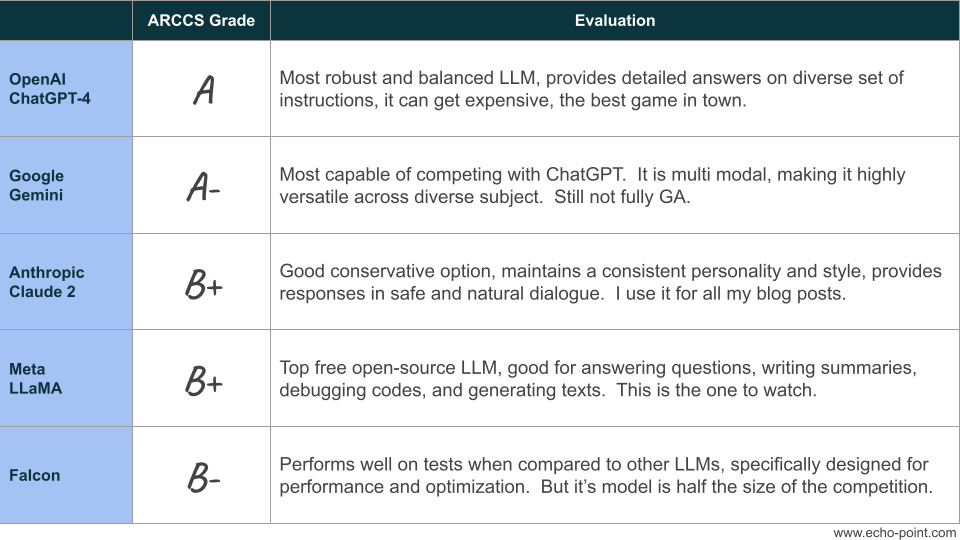

Late last year, I evaluated the top 5 LLMs across the 5 key dimensions - Accuracy, Reliability, Customization, Costs, and Safety (ARCCS framework).

Here is how they ranked (full article):

Today we are going to dive deeper into each of the LLMs.

Specifically, for each model I cover:

Pros

Cons

Bottom Line

Note: Since my last article, Google released Gemini. I have replaced PaLM with Gemini for this analysis.

ChatGPT-4

ARCCS Grade: A

Summary:

ChatGPT-4 is the most popular LLM currently available. Thanks to extensive training data, it delivers highly accurate and detailed responses to a diverse range of prompts. It also rates highly in reliability, with proven speed, scalability, and ease of use, and it compares well against other LLMs on safety and security, especially in guarding against bias and misuse. Like any LLM, however, it is never going to be perfect.

Pros:

Most popular LLM available, default choice for most organizations

Capable of generating responses up to 25,000 words

Can provide detailed instructions for even the most complex tasks

Allows you to fine-tune the model on your own dataset to get tailored results that match your needs

Cons:

Can get expensive based on the type of tasks

Suffering growing pains in speed and availability, which the company is aggressively addressing

Bottom Line:

ChatGPT-4 is the most robust LLM for its balance of advanced natural language processing in an easy-to-access package. While it is not the only game in town, its accuracy, reliability, and safeguards allow you to automate key business functions if configured properly. It is the go-to model as enterprises begin to experiment with LLMs.

Google Gemini

ARCCS Grade: A-

Summary:

Gemini is a powerful language model developed by Google, designed to provide accurate and detailed responses to a wide range of queries. It is a relatively new entrant in the field of large language models (LLMs) and is positioned as a strong competitor to OpenAI's ChatGPT. Gemini offers various editions, including Gemini Ultra, Gemini Pro, and Gemini Nano, each with different capabilities and availability.

Pros:

Advanced language model with various editions catering to different needs

Capable of handling complex tasks and providing accurate responses

Positioned as a strong competitor to existing LLMs like ChatGPT

Cons:

Lack of transparency regarding source data

Slower response times compared to some existing LLMs like ChatGPT

Limited availability: Nano is accessible through the Pixel 8, and Pro through Bard (since rebranded as Gemini)

Bottom Line:

Gemini is a promising addition to the landscape of large language models, offering advanced capabilities and various editions to cater to different user requirements. While it competes with established models like ChatGPT, it also faces criticism for its slower response times and lack of transparency. As it continues to evolve, Gemini has the potential to become a significant player in the field of natural language processing.

Claude 2

ARCCS Grade: B+

Summary:

With a context window of 100K tokens, Claude 2 is better suited for large, data-heavy tasks, such as summarizing long essays or analyzing large amounts of data. It is also safer and more conservative in its responses, with a focus on natural, harmless dialogue.
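As a rough illustration of what a 100K context window means in practice, the sketch below estimates whether a document would fit. The ratio of 4 characters per token is a common rule of thumb for English text, not Claude's actual tokenizer, and the function names and the reply budget are hypothetical choices made for this example.

```python
# Rough check of whether a text fits in a 100K-token context window.
# ASSUMPTION: ~4 characters per token is a rule of thumb for English text,
# not the model's real tokenizer; actual token counts will differ.

CONTEXT_WINDOW_TOKENS = 100_000  # figure quoted in this article for Claude 2
CHARS_PER_TOKEN = 4              # heuristic, assumed for illustration only


def estimate_tokens(text: str) -> int:
    """Estimate the token count from character length (ceiling division)."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(text: str, reserved_for_reply: int = 2_000) -> bool:
    """Return True if the estimated prompt size still leaves room for a reply.

    reserved_for_reply is a hypothetical budget for the model's answer.
    """
    return estimate_tokens(text) + reserved_for_reply <= CONTEXT_WINDOW_TOKENS


if __name__ == "__main__":
    essay = "word " * 60_000  # 60,000 short words, 300,000 characters
    print(estimate_tokens(essay), fits_in_context(essay))
```

For real workloads, count tokens with the provider's own tooling instead of a character heuristic.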

Pros:

Safer, more cautious responses

Will clarify ambiguities and confess knowledge gaps

Naturally flowing conversations with larger memory

Clearer reasoning and common sense

More conservative, less prone to mistakes

Focus on natural, harmless dialogue

Cons:

More limited capabilities than ChatGPT-4

Lacks creative writing skills

Reluctant to provide certain information

Perspective adheres closely to guidelines

Conversations can feel restrictive

Bottom Line:

Overall, Claude 2 is a good choice for product leaders who need an AI assistant that is safe, conservative, and can handle large amounts of data. However, it may not be the best choice for tasks that require creative writing skills or a broader range of capabilities.

Meta Llama 2

ARCCS Grade: B+

Summary:

Llama 2 is an open-source large language model created by Meta and is available for free for research and commercial use. It is trained on 2 trillion tokens and over 1 million human annotations. Llama 2 outperforms other open-source language models on many external benchmarks, including reasoning, coding, proficiency, and knowledge tests.

Pros:

Open-source and free for research and commercial use

Large range of pretrained and fine-tuned models

Outperforms other open-source language models on many benchmarks

Capable of handling large amounts of data

Supports the common programming languages in use today, including Python, C++, Java, PHP, TypeScript (JavaScript), C#, and Bash

Cons:

Not primarily designed to be a chatbot

Limited information on its creative writing skills

Limited information on its ability to handle multimodal inputs

Limited information on its ability to detect sarcasm, mood, and subtle emotions

Bottom Line:

Overall, Llama 2 is a good choice for product leaders who need an open-source AI assistant that is capable of handling large amounts of data and supports common programming languages. However, it may not be the best choice for tasks that require creative writing skills or a broader range of capabilities.

Falcon

ARCCS Grade: B-

Summary:

Falcon 180B is an open-access LLM with 180 billion parameters, trained on 3.5 trillion tokens. It performs well in various tasks like reasoning, coding, proficiency, and knowledge tests. Despite being a much smaller model, it ranks just behind GPT-4 and performs on par with PaLM 2.
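To put the 180 billion parameter figure in perspective, here is a back-of-the-envelope estimate of the memory needed just to hold the weights. The bytes-per-parameter values are the standard sizes of each numeric format, but the calculation ignores activations, caches, and runtime overhead, so treat the result as a lower bound and not a sizing guide. The function and constant names are hypothetical.

```python
# Lower-bound memory estimate for storing model weights only.
# ASSUMPTION: ignores activations, KV cache, and framework overhead.

FALCON_180B_PARAMS = 180_000_000_000  # parameter count quoted in this article

# Storage size of one parameter in each numeric format.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: int, precision: str) -> float:
    """Return the weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    for precision in BYTES_PER_PARAM:
        gb = weight_memory_gb(FALCON_180B_PARAMS, precision)
        print(f"{precision}: {gb:.0f} GB")
```

At 16-bit precision this works out to 360 GB for the weights alone, which is why a model of this size is usually served across several accelerators or in a quantized form.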

Pros:

Fine-tuned on open assistant datasets

Capable of handling large amounts of data

Can be used for text-generation-inference

Cons:

Limited information on its creative writing skills and ability to handle multimodal inputs

Roughly half the size of ChatGPT-4, Llama, and Bard

Limited information on its commercial terms of use and external developer contributions

Bottom Line:

Falcon LLM is a good choice for executives who need an AI assistant that is fine-tuned on open assistant datasets and can handle large amounts of data. However, since it is half the size of other models, there may be issues with performance and accuracy.
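If you want to compare the five ARCCS grades side by side, a small sketch like the one below can help. The letter grades are the ones given in this article. The grade-point mapping is an assumption (a conventional 4.0 scale), and the function names and the shortlist threshold are hypothetical.

```python
# Rank the models by the ARCCS letter grades given in this article.
# ASSUMPTION: the grade-point values follow a conventional 4.0 scale;
# they are not part of the ARCCS framework itself.

GRADE_POINTS = {"A": 4.0, "A-": 3.7, "B+": 3.3, "B": 3.0, "B-": 2.7}

ARCCS_GRADES = {
    "ChatGPT-4": "A",
    "Google Gemini": "A-",
    "Claude 2": "B+",
    "Meta Llama 2": "B+",
    "Falcon": "B-",
}


def rank_models(grades: dict[str, str]) -> list[tuple[str, str]]:
    """Sort (model, grade) pairs from highest to lowest grade.

    Python's sort is stable, so tied models keep their original order.
    """
    return sorted(grades.items(), key=lambda item: GRADE_POINTS[item[1]], reverse=True)


def shortlist(grades: dict[str, str], minimum: str) -> list[str]:
    """Return the models graded at or above the given minimum letter grade."""
    floor = GRADE_POINTS[minimum]
    return [name for name, grade in rank_models(grades) if GRADE_POINTS[grade] >= floor]


if __name__ == "__main__":
    for name, grade in rank_models(ARCCS_GRADES):
        print(f"{grade:<2} {name}")
    print(shortlist(ARCCS_GRADES, "B+"))
```

A single letter grade hides the trade-offs across the five dimensions, so use a ranking like this as a starting point and then weigh the pros and cons for your own use case.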

Happy building!!